Trying Text-to-3D Generation with Score Jacobian Chaining (SJC)

I tried text-to-3D generation with Score Jacobian Chaining (SJC), so here is a summary.

I ran this on the Premium GPU class of Colab Pro / Pro+. Training took about 30 minutes.


1. Score Jacobian Chaining (SJC)

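Score Jacobian Chaining (SJC) is a method for generating 3D content from text. It needs no 3D training data: it converts a pretrained 2D diffusion generative model for images into a 3D generative model of a radiance field.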
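To make the "2D score to 3D gradient" idea concrete, here is a toy sketch of the chain rule involved. It is not the SJC implementation. I assume a hypothetical linear "renderer" (`render_matrix`) in place of a radiance field, and a Gaussian toy denoiser (`gaussian_denoiser`) in place of Stable Diffusion. The only general fact used is that a denoiser output D(x; σ) gives a score estimate (D(x; σ) - x) / σ².

```python
import numpy as np

def gaussian_denoiser(x, sigma):
    """Optimal denoiser if clean images were drawn from N(0, I) (toy assumption)."""
    return x / (1.0 + sigma**2)

def image_score(x, sigma, denoiser):
    """Score of the noised image distribution, estimated from a denoiser output."""
    return (denoiser(x, sigma) - x) / sigma**2

def chained_gradient(theta, render_matrix, sigma, rng):
    """Pull the 2D image score back to the 3D parameters through the Jacobian."""
    x = render_matrix @ theta                            # hypothetical linear renderer
    noisy_x = x + sigma * rng.standard_normal(x.shape)   # perturb the rendered image
    score = image_score(noisy_x, sigma, gaussian_denoiser)
    return render_matrix.T @ score                       # Jacobian^T times image score

rng = np.random.default_rng(0)
A = rng.standard_normal((16, 4))   # stand-in for one camera's renderer
theta = rng.standard_normal(4)     # stand-in for the 3D parameters
grad = chained_gradient(theta, A, sigma=0.5, rng=rng)
print(grad.shape)                  # (4,)
```

In the real method the renderer is a differentiable radiance field and the Jacobian product is computed by backpropagation, so nothing like `render_matrix` appears explicitly.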

2. Installing sjc

To install SJC on Colab, follow the steps below. They are based on the official notebook, adjusted so that it runs on Colab.

(1) In the menu, choose "Edit → Notebook settings" and select "GPU" under "Hardware accelerator".

For speed, I used the "Premium" GPU class of Colab Pro.

(2) Check the GPU.

# Check the GPU
!nvidia-smi

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 460.32.03    Driver Version: 460.32.03    CUDA Version: 11.2     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  A100-SXM4-40GB      Off  | 00000000:00:04.0 Off |                    0 |
| N/A   42C    P0    44W / 400W |      0MiB / 40536MiB |      0%      Default |
|                               |                      |             Disabled |
+-------------------------------+----------------------+----------------------+

(3) Mount Google Drive.

from google.colab import drive
drive.mount('/content/drive')

(3) Install the "sjc" package and move into the "sjc" folder.

import os

# Clone the repository into Drive on the first run, otherwise reuse it
if not os.path.exists('/content/drive/MyDrive/sjc'):
    print('Installing from scratch...')
    %cd /content/drive/MyDrive/
    !git clone https://github.com/pals-ttic/sjc/
    %cd sjc
else:
    print('repo already exists in Drive...')
    %cd /content/drive/MyDrive/sjc

!pip install pytorch_lightning -U
!git clone --depth 1 https://github.com/CompVis/taming-transformers.git && pip install -e taming-transformers
!pip install -r requirements.txt
!pip install imageio-ffmpeg
print('Continue to next cell.')

(4) Install the Stable Diffusion dependencies.

# Install the Stable Diffusion dependencies
!pip install -e git+https://github.com/CompVis/taming-transformers.git@master#egg=taming-transformers
!pip install pytorch_lightning tensorboard==2.8 omegaconf einops taming-transformers==0.0.1 clip transformers kornia test-tube
!pip install diffusers invisible-watermark
!pip install pytorch-lightning==1.8.3.post0

(5) Download the assets.

# Download the assets
if not os.path.exists('/content/drive/MyDrive/sjc/release'):
    print('Downloading checkpoints. This will take a while...')
    !wget https://dl.ttic.edu/pals/sjc/release.tar
    !tar -xvf /content/drive/MyDrive/sjc/release.tar
else:
    print('checkpoints already downloaded')

(6) Update env.json.

%%writefile env.json
{
    "data_root": "/content/drive/MyDrive/sjc/release"
}

(7) Update adapt_sd.py.

%%writefile adapt_sd.py
import sys
from pathlib import Path
import torch
import numpy as np
from omegaconf import OmegaConf
from einops import rearrange

from torch import autocast  # needed by precision_scope below
from contextlib import nullcontext
from math import sqrt
from adapt import ScoreAdapter

import warnings
from transformers import logging
warnings.filterwarnings("ignore", category=DeprecationWarning)
logging.set_verbosity_error()


device = torch.device("cuda")


def curr_dir():
    return Path(__file__).resolve().parent


def add_import_path(dirname):
    sys.path.append(str(
        curr_dir() / str(dirname)
    ))


def load_model_from_config(config, ckpt, verbose=False):
    from ldm.util import instantiate_from_config
    print(f"Loading model from {ckpt}")
    pl_sd = torch.load(ckpt, map_location="cpu")
    if "global_step" in pl_sd:
        print(f"Global Step: {pl_sd['global_step']}")
    sd = pl_sd["state_dict"]
    model = instantiate_from_config(config.model)
    m, u = model.load_state_dict(sd, strict=False)
    if len(m) > 0 and verbose:
        print("missing keys:")
        print(m)
    if len(u) > 0 and verbose:
        print("unexpected keys:")
        print(u)

    model.to(device)
    model.eval()
    return model


def load_sd1_model(ckpt_root):
    ckpt_fname = ckpt_root / "stable_diffusion" / "sd-v1-5.ckpt"
    cfg_fname = curr_dir() / "sd1" / "configs" / "v1-inference.yaml"
    H, W = 512, 512

    config = OmegaConf.load(str(cfg_fname))
    model = load_model_from_config(config, str(ckpt_fname))
    return model, H, W


def load_sd2_model(ckpt_root, v2_highres):
    if v2_highres:
        ckpt_fname = ckpt_root / "sd2" / "768-v-ema.ckpt"
        cfg_fname = curr_dir() / "sd2/configs/stable-diffusion/v2-inference-v.yaml"
        H, W = 768, 768
    else:
        ckpt_fname = ckpt_root / "sd2" / "512-base-ema.ckpt"
        cfg_fname = curr_dir() / "sd2/configs/stable-diffusion/v2-inference.yaml"
        H, W = 512, 512

    config = OmegaConf.load(f"{cfg_fname}")
    model = load_model_from_config(config, str(ckpt_fname))
    return model, H, W


def _sqrt(x):
    if isinstance(x, float):
        return sqrt(x)
    else:
        assert isinstance(x, torch.Tensor)
        return torch.sqrt(x)


class StableDiffusion(ScoreAdapter):
    def __init__(self, variant, v2_highres, prompt, scale, precision):
        if variant == "v1":
            add_import_path("sd1")
            self.model, H, W = load_sd1_model(self.checkpoint_root())
        elif variant == "v2":
            add_import_path("sd2")
            self.model, H, W = load_sd2_model(self.checkpoint_root(), v2_highres)
        else:
            raise ValueError(f"{variant}")

        ae_resolution_f = 8  # downsampling factor of the autoencoder

        self._device = self.model._device

        self.prompt = prompt
        self.scale = scale
        self.precision = precision
        # autocast is used only when precision is literally True;
        # any other value falls back to nullcontext
        self.precision_scope = autocast if self.precision == True else nullcontext
        self._data_shape = (4, H // ae_resolution_f, W // ae_resolution_f)

        self.cond_func = self.model.get_learned_conditioning
        self.M = 1000
        noise_schedule = "linear"
        self.noise_schedule = noise_schedule
        self.us = self.linear_us(self.M)

    def data_shape(self):
        return self._data_shape

    @property
    def σ_max(self):
        return self.us[0]

    @property
    def σ_min(self):
        return self.us[-1]

    @torch.no_grad()
    def denoise(self, xs, σ, **model_kwargs):
        with self.precision_scope("cuda"):
            with self.model.ema_scope():
                N = xs.shape[0]
                c = model_kwargs.pop('c')
                uc = model_kwargs.pop('uc')
                cond_t, σ = self.time_cond_vec(N, σ)
                unscaled_xs = xs
                xs = xs / _sqrt(1 + σ**2)
                if uc is None or self.scale == 1.:
                    output = self.model.apply_model(xs, cond_t, c)
                else:
                    # classifier-free guidance: run unconditional and conditional together
                    x_in = torch.cat([xs] * 2)
                    t_in = torch.cat([cond_t] * 2)
                    c_in = torch.cat([uc, c])
                    e_t_uncond, e_t = self.model.apply_model(x_in, t_in, c_in).chunk(2)
                    output = e_t_uncond + self.scale * (e_t - e_t_uncond)

                if self.model.parameterization == "v":
                    output = self.model.predict_eps_from_z_and_v(xs, cond_t, output)
                else:
                    output = output

                # denoised estimate from the predicted noise
                Ds = unscaled_xs - σ * output
                return Ds

    def cond_info(self, batch_size):
        prompts = batch_size * [self.prompt]
        return self.prompts_emb(prompts)

    @torch.no_grad()
    def prompts_emb(self, prompts):
        assert isinstance(prompts, list)
        batch_size = len(prompts)
        with self.precision_scope("cuda"):
            with self.model.ema_scope():
                cond = {}
                c = self.cond_func(prompts)
                cond['c'] = c
                uc = None
                if self.scale != 1.0:
                    uc = self.cond_func(batch_size * [""])
                cond['uc'] = uc
                return cond

    def unet_is_cond(self):
        return True

    def use_cls_guidance(self):
        return False

    def snap_t_to_nearest_tick(self, t):
        j = np.abs(t - self.us).argmin()
        return self.us[j], j

    def time_cond_vec(self, N, σ):
        if isinstance(σ, float):
            σ, j = self.snap_t_to_nearest_tick(σ)  # σ might change due to snapping
            cond_t = (self.M - 1) - j
            cond_t = torch.tensor([cond_t] * N, device=self.device)
            return cond_t, σ
        else:
            assert isinstance(σ, torch.Tensor)
            σ = σ.reshape(-1).cpu().numpy()
            σs = []
            js = []
            for elem in σ:
                _σ, _j = self.snap_t_to_nearest_tick(elem)
                σs.append(_σ)
                js.append((self.M - 1) - _j)

            cond_t = torch.tensor(js, device=self.device)
            σs = torch.tensor(σs, device=self.device, dtype=torch.float32).reshape(-1, 1, 1, 1)
            return cond_t, σs

    @staticmethod
    def linear_us(M=1000):
        assert M == 1000
        β_start = 0.00085
        β_end = 0.0120
        βs = np.linspace(β_start**0.5, β_end**0.5, M, dtype=np.float64)**2
        αs = np.cumprod(1 - βs)
        us = np.sqrt((1 - αs) / αs)
        us = us[::-1]  # largest noise level first
        return us

    @torch.no_grad()
    def encode(self, xs):
        model = self.model
        with self.precision_scope("cuda"):
            with self.model.ema_scope():
                zs = model.get_first_stage_encoding(
                    model.encode_first_stage(xs)
                )
        return zs

    @torch.no_grad()
    def decode(self, xs):
        with self.precision_scope("cuda"):
            with self.model.ema_scope():
                xs = self.model.decode_first_stage(xs)
                return xs


def test():
    sd = StableDiffusion("v2", True, "haha", 10.0, True)
    print(sd)


if __name__ == "__main__":
    test()

(8) Create an exp folder as the working directory and move into it.

# Create the working folder
if not os.path.exists('exp'):
    !mkdir exp
%cd exp

3. Running 3D model generation

The steps to run text-to-3D generation are as follows.

(1) Run text-to-3D generation.

# Text-to-3D generation
!python ../run_sjc.py \
    --sd.prompt "Biden figure" \
    --n_steps 10000 \
    --lr 0.05 \
    --sd.scale 100.0 \
    --emptiness_weight 10000 \
    --emptiness_step 0.5 \
    --emptiness_multiplier 20.0 \
    --depth_weight 0

The parameters are as follows.

・sd.prompt : the prompt
・n_steps : number of steps
・lr : learning rate
・sd.scale : guidance scale for Stable Diffusion
・emptiness_weight : weighting factor for the emptiness loss
・emptiness_step : after emptiness_step * n_steps update steps, emptiness_weight is multiplied by emptiness_multiplier
・emptiness_multiplier : see above
・depth_weight : weighting factor for the center depth loss
・var_red : whether to use Eq. 16 or Eq. 15
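As a small illustration of what `sd.scale` does, the `denoise` method in adapt_sd.py combines the unconditional and prompt-conditioned noise predictions with `e_t_uncond + self.scale * (e_t - e_t_uncond)`. The sketch below reproduces just that formula on made-up numbers. The function name `guided_noise` and the input values are my own and are not part of the sjc code.

```python
import numpy as np

def guided_noise(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: push the prediction away from the unconditional one."""
    return eps_uncond + scale * (eps_cond - eps_uncond)

# Made-up noise predictions, for illustration only
eps_uncond = np.array([0.0, 1.0])
eps_cond = np.array([0.1, 0.9])

print(guided_noise(eps_uncond, eps_cond, 1.0))    # scale 1 recovers the conditional prediction
print(guided_noise(eps_uncond, eps_cond, 100.0))  # scale 100 amplifies the difference 100x
```

With `--sd.scale 100.0` as in the command above, the difference between the two predictions is amplified a hundredfold, far more than the single-digit scales typically used for plain image generation.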

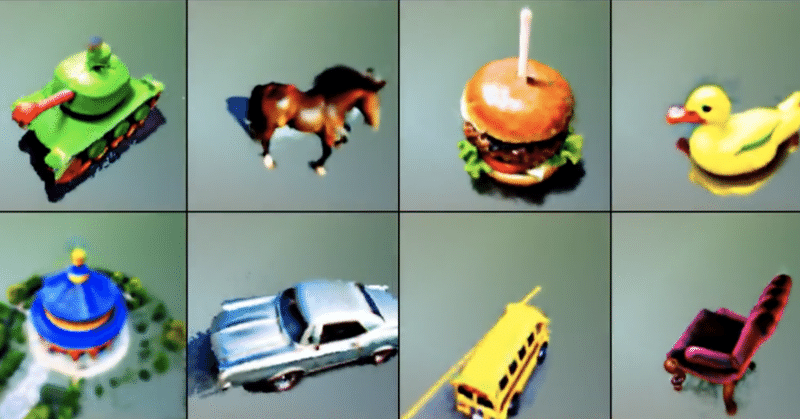
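The `emptiness_*` parameters above describe a simple step schedule, sketched below. The function `emptiness_weight_at` is a hypothetical helper of mine that follows the parameter description. The exact boundary handling inside run_sjc.py may differ.

```python
def emptiness_weight_at(step, n_steps=10000, emptiness_weight=10000.0,
                        emptiness_step=0.5, emptiness_multiplier=20.0):
    """Emptiness-loss weight at a given update step, per the description above."""
    switch_step = int(emptiness_step * n_steps)  # step at which the weight is boosted
    if step >= switch_step:
        return emptiness_weight * emptiness_multiplier
    return emptiness_weight

for step in (0, 4999, 5000, 9999):
    print(step, emptiness_weight_at(step))
```

With the values used in the command above, the weight stays at 10000 for the first 5000 steps and is 200000 from then on.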

The following 3D model was generated.

Tried generating a humanoid 3D model with sjc. 10,000 steps took 28 minutes on Colab Pro (A100). pic.twitter.com/DShQfBNBg5

— 布留川英一 / Hidekazu Furukawa (@npaka123) December 4, 2022
