Running Stable Zero123 on Windows 10 (RTX 3090)

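Exactly what the title says. It ate a whole day, so here is a rough summary.

I'm not confident this is actually the right way to do it. I went through so much trial and error that my notes are a mess.

Prerequisites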

CUDA:11.8

cuDNN: v8.9.7 (December 5th, 2023), for CUDA 11.x

(possibly unnecessary, but just in case)

Python: 3.10.11

Build Tools for Visual Studio 2022

(Installation guide: https://www.kkaneko.jp/tools/win/buildtool2022.html)
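Before going further it may be worth confirming that the toolchain on PATH matches the versions listed above. The following is a minimal sketch, not part of the original procedure: the function names are hypothetical, and it assumes `nvcc --version` prints a line containing `release 11.8` as the CUDA toolkit normally does.

```python
import re
import subprocess
import sys


def parse_nvcc_release(output):
    """Extract (major, minor) from `nvcc --version` text, or None if not found."""
    match = re.search(r"release (\d+)\.(\d+)", output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def check_prerequisites(expected_cuda=(11, 8), expected_python=(3, 10)):
    """Return a list of mismatches; an empty list means both versions matched."""
    problems = []
    if tuple(sys.version_info[:2]) != expected_python:
        problems.append(
            f"Python is {sys.version_info[0]}.{sys.version_info[1]}, "
            f"expected {expected_python[0]}.{expected_python[1]}"
        )
    try:
        result = subprocess.run(
            ["nvcc", "--version"], capture_output=True, text=True, check=False
        )
        found = parse_nvcc_release(result.stdout)
    except OSError:
        # nvcc is not installed or not on PATH
        found = None
    if found != expected_cuda:
        problems.append(f"nvcc reports CUDA {found}, expected {expected_cuda}")
    return problems
```

Calling `check_prerequisites()` in the Python you plan to use for the venv gives a quick list of anything that differs from the setup in this post.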

Things to do beforehand

Copy the following four files from

"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8\extras\visual_studio_integration\MSBuildExtensions"

CUDA 11.8.props

CUDA 11.8.targets

CUDA 11.8.xml

Nvda.Build.CudaTasks.v11.8.dll

into

"C:\Program Files (x86)\Microsoft Visual Studio\2022\BuildTools\MSBuild\Microsoft\VC\v170\BuildCustomizations"

Set the CUDA folder as the value under the following registry key.

HKEY_LOCAL_MACHINE\SOFTWARE\NVIDIA Corporation\GPU Computing Toolkit\CUDA\v11.8

InstallDir REG_SZ (string) C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8
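The four-file copy at the start of this section can also be scripted. This is only a sketch under the assumption that the file names are exactly the ones listed above. The helper name `copy_cuda_msbuild_files` is made up for illustration, and writing into "Program Files (x86)" will normally require an elevated prompt.

```python
import shutil
from pathlib import Path

# File names as listed in this post for CUDA 11.8.
CUDA_MSBUILD_FILES = [
    "CUDA 11.8.props",
    "CUDA 11.8.targets",
    "CUDA 11.8.xml",
    "Nvda.Build.CudaTasks.v11.8.dll",
]


def copy_cuda_msbuild_files(src_dir, dst_dir, names=CUDA_MSBUILD_FILES):
    """Copy the CUDA MSBuild integration files and return the names copied."""
    src_dir, dst_dir = Path(src_dir), Path(dst_dir)
    copied = []
    for name in names:
        source = src_dir / name
        if not source.is_file():
            raise FileNotFoundError(f"missing integration file: {source}")
        shutil.copy2(source, dst_dir / name)  # overwrites an existing copy
        copied.append(name)
    return copied
```

Pass the MSBuildExtensions folder as `src_dir` and the BuildCustomizations folder as `dst_dir`, using the two paths quoted above.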

Add the following to the user PATH environment variable

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8\bin

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8\libnvvp

C:\Program Files\CMake\bin
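Since a missing PATH entry tends to surface only much later as a confusing build error, here is a small sketch for checking the three entries above. It is an illustration rather than part of the original steps: `missing_path_entries` is a hypothetical helper, and the comparison ignores case and trailing slashes on the assumption that Windows treats those as equivalent.

```python
import os

# The three entries this post adds to PATH.
REQUIRED = [
    r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8\bin",
    r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8\libnvvp",
    r"C:\Program Files\CMake\bin",
]


def missing_path_entries(path_value, required=REQUIRED, sep=";"):
    """Return the required directories that do not appear in a PATH string."""

    def norm(entry):
        # Ignore surrounding quotes, trailing slashes and letter case.
        return entry.strip().strip('"').rstrip("\\/").lower()

    present = {norm(entry) for entry in path_value.split(sep) if entry.strip()}
    return [entry for entry in required if norm(entry) not in present]
```

In a fresh Command Prompt, `missing_path_entries(os.environ.get("PATH", ""))` should return an empty list once the variables have been registered.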

Installation steps

cd C:\

git clone https://github.com/threestudio-project/threestudio

cd C:\threestudio

python -m venv venv

.\venv\Scripts\activate

python -m pip install pip==23.0.1

pip install ninja

Edit requirements.txt as follows

git+https://github.com/KAIR-BAIR/nerfacc.git@v0.5.2

git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch

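↓ (the two lines appear identical before and after as posted; since nerfacc and tiny-cuda-nn are both installed separately in the steps below, they were presumably commented out or removed here)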

git+https://github.com/KAIR-BAIR/nerfacc.git@v0.5.2

git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch

xformers

↓

xformers==0.0.19
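The xformers pin can be applied with a few lines of Python instead of editing by hand. This is a sketch with a hypothetical helper name, `pin_requirement`. It only handles plain requirement lines like the ones shown here, not the full requirements-file syntax.

```python
import re


def pin_requirement(text, package, version):
    """Return requirements text with `package` pinned to `package==version`."""
    pinned_lines = []
    for line in text.splitlines():
        # The package name is whatever precedes a version specifier,
        # an extras bracket, an environment marker or whitespace.
        name = re.split(r"[=<>!~\[;\s]", line.strip(), maxsplit=1)[0]
        if name.lower() == package.lower():
            pinned_lines.append(f"{package}=={version}")
        else:
            pinned_lines.append(line)
    return "\n".join(pinned_lines) + "\n"
```

For example, reading requirements.txt, passing its contents through `pin_requirement(text, "xformers", "0.0.19")` and writing the result back reproduces the second change above while leaving the git+https lines untouched.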

pip install -r requirements.txt

pip install torch==2.0.0 torchvision==0.15.1 --index-url https://download.pytorch.org/whl/cu118

curl -L -o nerfacc-0.5.2+pt20cu118-cp310-cp310-win_amd64.whl https://nerfacc-bucket.s3.us-west-2.amazonaws.com/whl/torch-2.0.0_cu118/nerfacc-0.5.2%2Bpt20cu118-cp310-cp310-win_amd64.whl

pip install nerfacc-0.5.2+pt20cu118-cp310-cp310-win_amd64.whl

Reopen the Command Prompt with administrator privileges and run the following

cd C:\threestudio

.\venv\Scripts\activate

git clone --recursive https://github.com/NVlabs/tiny-cuda-nn

cd tiny-cuda-nn

cmake . -B build -DCMAKE_BUILD_TYPE=RelWithDebInfo

cmake --build build --config RelWithDebInfo -j

cd bindings\torch

python .\setup.py install

Download stable_zero123.ckpt so that it ends up at "C:\threestudio\load\zero123\stable_zero123.ckpt"

https://huggingface.co/stabilityai/stable-zero123
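At this point a quick sanity check can save a failed training launch. The sketch below is an assumption-laden illustration, not something the original setup ran. The helper names are hypothetical, and the module names `torch`, `nerfacc`, `tinycudann` and `xformers` are what I understand the import names of these packages to be.

```python
import importlib.util
from pathlib import Path


def missing_modules(names):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def check_install(root=r"C:\threestudio"):
    """Report missing Python packages and whether the checkpoint is in place."""
    checkpoint = Path(root) / "load" / "zero123" / "stable_zero123.ckpt"
    return {
        "missing_modules": missing_modules(
            ["torch", "nerfacc", "tinycudann", "xformers"]
        ),
        "checkpoint_found": checkpoint.is_file(),
    }
```

Run `check_install()` inside the activated venv. An empty `missing_modules` list together with `checkpoint_found` being true suggests the steps so far took effect, though it does not prove the CUDA extensions load correctly.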

Settings for the RTX 3090

Addendum: it also ran with the default settings.

I set

"C:\threestudio\configs\stable-zero123.yaml"

as follows

name: "zero123-sai"
tag: "${data.random_camera.height}_${rmspace:${basename:${data.image_path}},_}"
exp_root_dir: "outputs"
seed: 0

data_type: "single-image-datamodule"
data: # threestudio/data/image.py -> SingleImageDataModuleConfig
  image_path: ./load/images/hamburger_rgba.png
  height: [128, 256, 256]
  width: [128, 256, 256]
  resolution_milestones: [200, 300]
  default_elevation_deg: 5.0
  default_azimuth_deg: 0.0
  default_camera_distance: 3.8
  default_fovy_deg: 20.0
  requires_depth: ${cmaxgt0orcmaxgt0:${system.loss.lambda_depth},${system.loss.lambda_depth_rel}}
  requires_normal: ${cmaxgt0:${system.loss.lambda_normal}}
  random_camera: # threestudio/data/uncond.py -> RandomCameraDataModuleConfig
    height: [64, 128, 256]
    width: [64, 128, 256]
    batch_size: [1, 1, 1]
    resolution_milestones: [200, 300]
    eval_height: 256
    eval_width: 256
    eval_batch_size: 1
    elevation_range: [-10, 80]
    azimuth_range: [-180, 180]
    camera_distance_range: [3.8, 3.8]
    fovy_range: [20.0, 20.0] # Zero123 has fixed fovy
    progressive_until: 0
    camera_perturb: 0.0
    center_perturb: 0.0
    up_perturb: 0.0
    light_position_perturb: 1.0
    light_distance_range: [7.5, 10.0]
    eval_elevation_deg: ${data.default_elevation_deg}
    eval_camera_distance: ${data.default_camera_distance}
    eval_fovy_deg: ${data.default_fovy_deg}
    light_sample_strategy: "dreamfusion"
    batch_uniform_azimuth: False
    n_val_views: 30
    n_test_views: 120

system_type: "zero123-system"
system:
  geometry_type: "implicit-volume"
  geometry:
    radius: 2.0
    normal_type: "analytic"

    # use Magic3D density initialization instead
    density_bias: "blob_magic3d"
    density_activation: softplus
    density_blob_scale: 10.
    density_blob_std: 0.5

    # coarse to fine hash grid encoding
    # to ensure smooth analytic normals
    pos_encoding_config:
      otype: HashGrid
      n_levels: 16
      n_features_per_level: 2
      log2_hashmap_size: 19
      base_resolution: 16
      per_level_scale: 1.447269237440378 # max resolution 4096
    mlp_network_config:
      otype: "VanillaMLP"
      activation: "ReLU"
      output_activation: "none"
      n_neurons: 64
      n_hidden_layers: 2

  material_type: "diffuse-with-point-light-material"
  material:
    ambient_only_steps: 100000
    textureless_prob: 0.05
    albedo_activation: sigmoid

  background_type: "solid-color-background" # unused

  renderer_type: "nerf-volume-renderer"
  renderer:
    radius: ${system.geometry.radius}
    num_samples_per_ray: 128
    return_comp_normal: ${cmaxgt0:${system.loss.lambda_normal_smooth}}
    return_normal_perturb: ${cmaxgt0:${system.loss.lambda_3d_normal_smooth}}

  prompt_processor_type: "dummy-prompt-processor" # Zero123 doesn't use prompts
  prompt_processor:
    pretrained_model_name_or_path: ""
    prompt: ""

  guidance_type: "stable-zero123-guidance"
  guidance:
    pretrained_config: "./load/zero123/sd-objaverse-finetune-c_concat-256.yaml"
    pretrained_model_name_or_path: "./load/zero123/stable_zero123.ckpt"
    vram_O: ${not:${gt0:${system.freq.guidance_eval}}}
    cond_image_path: ${data.image_path}
    cond_elevation_deg: ${data.default_elevation_deg}
    cond_azimuth_deg: ${data.default_azimuth_deg}
    cond_camera_distance: ${data.default_camera_distance}
    guidance_scale: 3.0
    min_step_percent: [50, 0.7, 0.3, 200] # (start_iter, start_val, end_val, end_iter)
    max_step_percent: [50, 0.98, 0.8, 200]

  freq:
    ref_only_steps: 0
    guidance_eval: 0

  loggers:
    wandb:
      enable: false
      project: "threestudio"
      name: None

  loss:
    lambda_sds: 0.1
    lambda_rgb: [100, 500., 1000., 400]
    lambda_mask: 50.
    lambda_depth: 0. # 0.05
    lambda_depth_rel: 0. # [0, 0, 0.05, 100]
    lambda_normal: 0. # [0, 0, 0.05, 100]
    lambda_normal_smooth: [100, 7.0, 5.0, 150, 10.0, 200]
    lambda_3d_normal_smooth: [100, 7.0, 5.0, 150, 10.0, 200]
    lambda_orient: 1.0
    lambda_sparsity: 0.5 # should be tweaked for every model
    lambda_opaque: 0.5

  optimizer:
    name: Adam
    args:
      lr: 0.01
      betas: [0.9, 0.99]
      eps: 1.e-8

trainer:
  max_steps: 600
  log_every_n_steps: 1
  num_sanity_val_steps: 0
  val_check_interval: 100
  enable_progress_bar: true
  precision: 16

checkpoint:
  save_last: true # save at each validation time
  save_top_k: -1
  every_n_train_steps: 100 # ${trainer.max_steps}
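Two kinds of list values in this config are easy to misread, so here is a rough sketch of how I understand them. It is based only on the inline comment `(start_iter, start_val, end_val, end_iter)` and on the name `resolution_milestones`. The function names are hypothetical, the linear ramp and the exact boundary behaviour at a milestone step are assumptions rather than a copy of threestudio's code, and the six-element lists under `loss` are not covered.

```python
import bisect


def linear_schedule(spec, step):
    """Interpolate a 4-element [start_iter, start_val, end_val, end_iter] spec."""
    start_iter, start_val, end_val, end_iter = spec
    progress = (step - start_iter) / (end_iter - start_iter)
    progress = max(0.0, min(1.0, progress))  # hold the end values outside the ramp
    return start_val + (end_val - start_val) * progress


def resolution_at(sizes, milestones, step):
    """Pick the entry of `sizes` that is active at `step`, given the milestones."""
    # Assumption: a milestone step itself already uses the next size.
    return sizes[bisect.bisect_right(milestones, step)]
```

Under these assumptions, `min_step_percent: [50, 0.7, 0.3, 200]` stays at 0.7 until step 50 and ramps down to 0.3 by step 200, and `height: [64, 128, 256]` with `resolution_milestones: [200, 300]` renders at 64 until step 200, 128 until step 300, and 256 afterwards.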

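I don't know whether it makes any difference, but I also set the following system environment variable

TCNN_CUDA_ARCHITECTURES

86 (for an RTX 4090 it would be 89, since its compute capability is 8.9)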
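The value is the GPU's compute capability with the dot removed, so it can be derived rather than looked up. A minimal sketch with hypothetical helper names: it assumes PyTorch is installed in the venv and uses `torch.cuda.get_device_capability`, which returns a `(major, minor)` tuple.

```python
def arch_from_capability(capability):
    """Turn a (major, minor) compute capability into a TCNN_CUDA_ARCHITECTURES value."""
    major, minor = capability
    return f"{major}{minor}"


def detect_arch(device_index=0):
    """Return the architecture string for the given GPU, or None if unavailable."""
    try:
        import torch  # imported lazily so the helper above works without PyTorch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return arch_from_capability(torch.cuda.get_device_capability(device_index))
```

On the RTX 3090 used here, `detect_arch()` should give the same 86 that was set by hand.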

Start training

In

C:\threestudio\load\images

place the image below as girl_rgba.png (image courtesy of Deltamon)

AI venture BlendAI (brand name) has announced the character "Deltamon" as the first release of its Alpha Paradise Project. … pic.twitter.com/mcmG5LWuk2

— BlendAI (@BlendAIjp) January 19, 2024

python launch.py --config configs/stable-zero123.yaml --train --gpu 0 data.image_path=./load/images/girl_rgba.png

Something came out

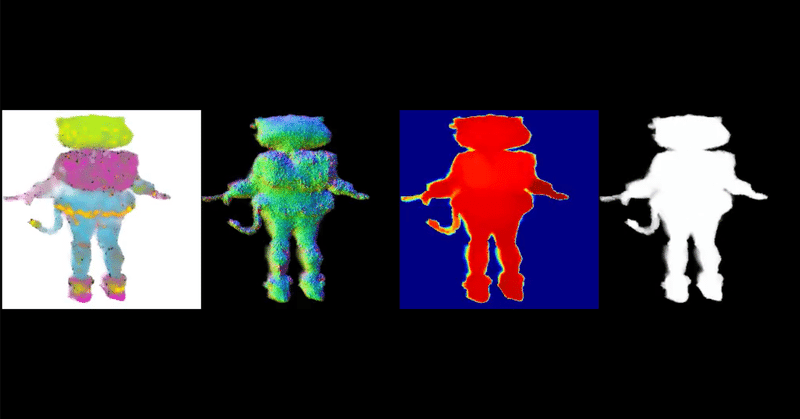

— とりにく (@tori29umai) January 23, 2024

Image used courtesy of Deltamon pic.twitter.com/SsXnp5xgSE

Well, it produced something.

Export

The path changes with the date and time of the run, so adjust it to match yours
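To avoid retyping the timestamped folder each time, the newest run can be looked up programmatically. This is a sketch, not what I actually ran. `latest_run` and `export_args` are hypothetical helpers, and the folder naming `...@YYYYMMDD-HHMMSS` is inferred from the single path that appears in this post.

```python
from pathlib import Path


def latest_run(exp_dir):
    """Return the run directory whose `@timestamp` suffix is the most recent."""
    runs = [p for p in Path(exp_dir).iterdir() if p.is_dir() and "@" in p.name]
    if not runs:
        raise FileNotFoundError(f"no run directories under {exp_dir}")
    # YYYYMMDD-HHMMSS sorts chronologically when compared as text.
    return max(runs, key=lambda p: p.name.rsplit("@", 1)[1])


def export_args(run_dir):
    """Build the export command as an argument list for subprocess.run."""
    run_dir = Path(run_dir)
    return [
        "python", "launch.py",
        "--config", str(run_dir / "configs" / "parsed.yaml"),
        "--export",
        "--gpu", "0",
        f"resume={run_dir / 'ckpts' / 'last.ckpt'}",
        "system.exporter_type=mesh-exporter",
        "system.exporter.context_type=cuda",
    ]
```

Passing `export_args(latest_run(r"outputs\zero123-sai"))` to `subprocess.run` from C:\threestudio sidesteps the quoting needed for the brackets and spaces in the folder name, because each argument is handed over as a separate list item.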

python launch.py ^

--config "outputs\zero123-sai\[64, 128, 256]_girl_rgba.png@20240124-073023\configs\parsed.yaml" ^

--export ^

--gpu 0 ^

resume="outputs\zero123-sai\[64, 128, 256]_girl_rgba.png@20240124-073023\ckpts\last.ckpt" ^

system.exporter_type=mesh-exporter ^

system.exporter.context_type=cuda

When I tried to run this, it did not work. I got an error along the lines of

> cannot open 'python310.lib'

so, as a brute-force fix, I copied the entire

C:\Users\minam\AppData\Local\Programs\Python\Python310\libs

folder into

C:\threestudio\venv\Scripts

and then it ran.

Why?!

A rather pitiful-looking Deltamon came out.

The end!
